Your AI agent runs every morning at 6 AM. You check the logs. Everything looks perfect. The process started, ran its course, and finished cleanly.

Except your agent didn't actually accomplish anything. No data was processed. No API calls succeeded. No tasks completed. Your scheduling infrastructure thinks everything worked because the script executed without errors. But for AI agents, execution isn't success.

This is the accountability gap that breaks agent workflows. It exists because cron has no concept of success. Your agents need one.

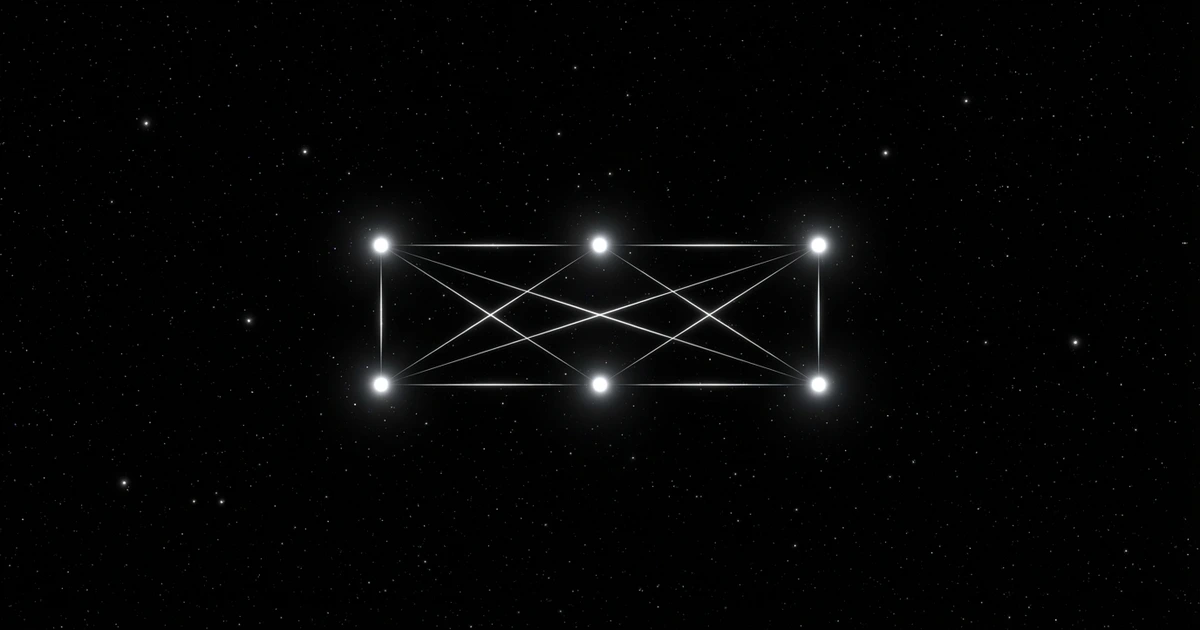

TL;DR: Cron only tracks process execution, not task outcomes. This creates an accountability gap where agents can "succeed" while accomplishing nothing. Modern AI agents need scheduling infrastructure that understands the difference between delivery and outcome.

Key Takeaways:

- Cron only tracks process execution (exit code 0), not actual task completion, creating an accountability gap where AI agents can "succeed" while accomplishing nothing
- A customer support AI agent ran successfully for 3 weeks (exit code 0 every time) while processing zero tickets due to database connection issues that cron couldn't detect
- Traditional Schedule → Deliver → Confirm workflows break with AI agents because cron has no visibility into whether agents processed 1,000 records or zero records
- Silent failures compound over time: without verified success tracking, a simple configuration fix turns into days of debugging missing data

The Silent Failure Problem

What Success Actually Means for AI Tasks

Traditional scripts either work or crash. A backup script copies files or fails with an error. A log rotation script clears old files or throws an exception.

AI agent tasks are different. Your agent might start successfully, initialize all its components, and exit cleanly while accomplishing nothing. The API it needed to call was down. The data source returned empty results. The model inference timed out.

Cron sees exit code 0 and marks it successful. You see broken workflows and confused users. This accountability gap is where agent reliability dies.

The Exit Code Illusion

Unix exit codes tell you about process execution, not task completion. Exit code 0 means "the process reported no error when it terminated." It doesn't mean "the program did what you wanted."

For AI agents, this distinction matters. Your agent's main process might handle exceptions gracefully, log errors properly, and exit cleanly even when core functionality fails. Cron has no execution visibility into whether the agent processed 1,000 records or zero.

This is why your agent's cron job failed even when cron thinks it succeeded.
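One way to narrow this gap from inside the script is to tie the exit code to work done rather than to the absence of a crash. The sketch below is illustrative only: `run_agent`, its `fetch` and `handle` callables, `min_processed`, and the exit codes 1 and 2 are assumptions made for this example, not part of cron or any particular agent framework.

```python
from typing import Callable, Iterable


def run_agent(fetch: Callable[[], Iterable[str]],
              handle: Callable[[str], None],
              min_processed: int = 1) -> int:
    """Return an exit code based on work done, not on whether we crashed.

    fetch and handle are hypothetical stand-ins for the agent's real
    data source and per-item logic.
    """
    processed = 0
    try:
        for item in fetch():
            handle(item)
            processed += 1
    except Exception as exc:
        # Handling the error gracefully is fine; hiding it behind exit 0 is not.
        print(f"agent error: {exc}")
        return 1
    if processed < min_processed:
        # A clean run that did no work is reported as a failure (assumed code 2).
        print(f"processed {processed} items, expected at least {min_processed}")
        return 2
    return 0
```

A wrapper script would end with something like `sys.exit(run_agent(fetch_tickets, process_ticket))`, where both function names are placeholders. A run that finds zero items then exits non-zero, although something still has to be watching that exit code.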

Real Costs of Missing Accountability

When Your Agent Runs But Does Nothing

Real example: A customer support AI agent was scheduled to process overnight tickets every morning at 7 AM. The cron job ran successfully for three weeks. Exit code 0 every time. Customer complaints kept increasing because tickets weren't being processed. The agent was starting, finding no new tickets due to a database connection issue, and exiting cleanly. Cron reported success. Customers reported frustration.

Silent failures compound. One missed execution becomes two. Two becomes a week of broken workflows. By the time you notice, you're debugging days of missing data instead of fixing a simple configuration issue.

AI agents often have complex dependency chains. Your agent needs the database, the API, the model endpoint, and the notification service. If any component fails gracefully, cron still reports success. Without verified success tracking, you're flying blind.
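To make a graceful dependency failure visible instead of silent, an agent can record the health of each dependency before it starts work. This is a minimal sketch under assumptions: the function names and the dependency labels in the usage note are hypothetical, and real checks would be actual connection or ping calls.

```python
from typing import Callable, Dict, List


def check_dependencies(checks: Dict[str, Callable[[], bool]]) -> Dict[str, bool]:
    """Run each named health check; a check that raises counts as unhealthy."""
    results: Dict[str, bool] = {}
    for name, check in checks.items():
        try:
            results[name] = bool(check())
        except Exception:
            results[name] = False
    return results


def failed_dependencies(results: Dict[str, bool]) -> List[str]:
    """Return the names of unhealthy dependencies in sorted order."""
    return sorted(name for name, ok in results.items() if not ok)
```

Called with checks for, say, `"database"`, `"api"`, `"model_endpoint"`, and `"notifications"`, the agent can refuse to report success whenever `failed_dependencies` returns a non-empty list.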

The Notification Gap

Cron can email you whatever a job prints, which is how failures usually surface. It cannot tell you when a job exits cleanly but accomplishes nothing. This notification gap is where agent workflows break.

You need to know not just that your agent ran, but what it did: how many items it processed, which APIs it called, and what errors it encountered. Traditional cron documentation doesn't even address this concept.

Without execution visibility, your scheduling for AI agents becomes a guessing game. You lose the accountability that makes agents trustworthy.
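A small structured run report is one way to capture what the agent did on each run. The sketch below is an assumption about shape, not a standard: `RunReport` and its field names are hypothetical, chosen to mirror the three questions above.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class RunReport:
    """Hypothetical per-run record: what was processed, called, and what broke."""
    items_processed: int = 0
    apis_called: List[str] = field(default_factory=list)
    errors: List[str] = field(default_factory=list)

    def to_json(self) -> str:
        # Sorted keys keep the output stable for log diffing.
        return json.dumps(asdict(self), sort_keys=True)


# Example: a run that started cleanly but did no useful work.
report = RunReport()
report.apis_called.append("ticket_api")
report.errors.append("database connection refused")
print(report.to_json())
```

Emitting one such line per run, to a log or to whatever system tracks your schedules, turns "it ran" into a record you can actually inspect.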

What Accountable Scheduling Provides

Success Confirmation vs Exit Codes

Accountable scheduling infrastructure understands the difference between execution and success. It tracks whether your agent actually accomplished its intended work.

Instead of just monitoring process exit codes, you define success criteria. "Process at least 10 records." "Call the customer API successfully." "Generate a summary report." The scheduler confirms these conditions were met, giving you verified success data.

This isn't just better monitoring. It's a fundamentally different approach to task scheduling that matches how AI agents actually work. It runs anywhere your agents do while providing the accountability cron cannot.
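Success criteria like the three quoted above can be written as named checks over a run's result. This is a hedged sketch: the result keys (`records_processed`, `customer_api_ok`, `summary_report`) and the `unmet_criteria` helper are hypothetical, and a real scheduler may express criteria quite differently.

```python
from typing import Any, Callable, Dict, List

Criterion = Callable[[Dict[str, Any]], bool]

# Each criterion is a named predicate over the run's result (assumed keys).
CRITERIA: Dict[str, Criterion] = {
    "processed_at_least_10_records": lambda r: r.get("records_processed", 0) >= 10,
    "customer_api_call_succeeded": lambda r: r.get("customer_api_ok") is True,
    "summary_report_generated": lambda r: bool(r.get("summary_report")),
}


def unmet_criteria(result: Dict[str, Any],
                   criteria: Dict[str, Criterion] = CRITERIA) -> List[str]:
    """Return the names of criteria the run did not satisfy."""
    return [name for name, check in criteria.items() if not check(result)]
```

A run counts as successful only when `unmet_criteria(result)` is empty. Otherwise the returned names say exactly which expectation was missed.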

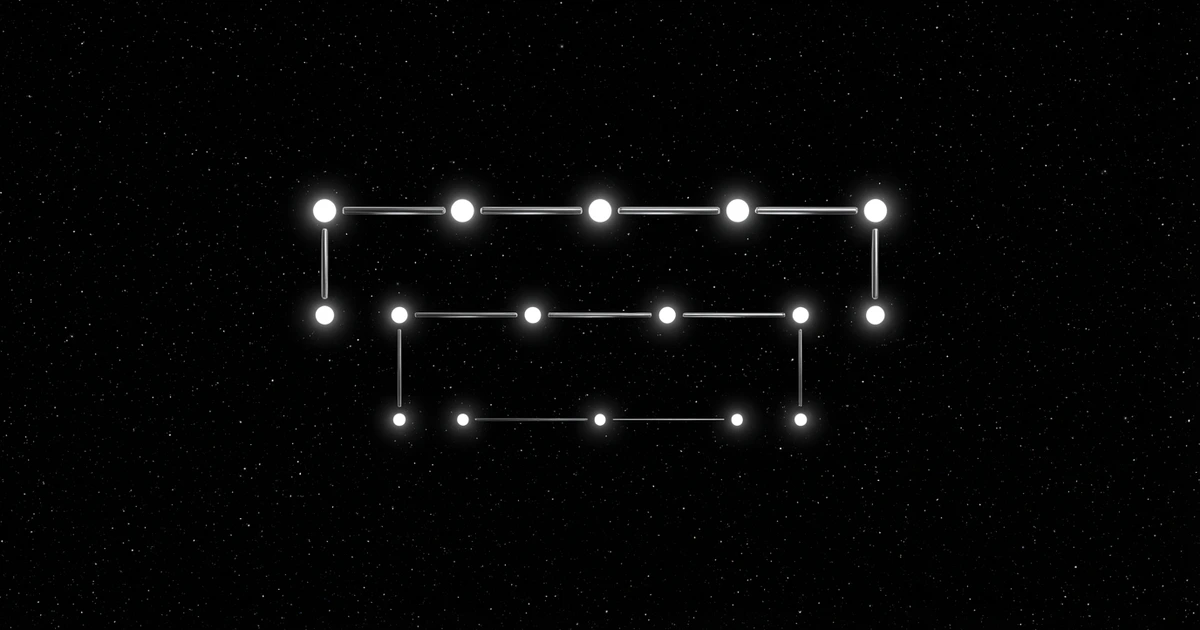

Observable Agent Workflows

Execution visibility changes how you build agents. You can see patterns. Which tasks consistently fail? When do performance issues occur? How does success rate correlate with data volume?

This data helps you optimize agent performance and catch issues before they impact users. You spend time building features instead of debugging why something didn't run.

Proper scheduling infrastructure shows you what happened, when it happened, and why it succeeded or failed. You can schedule your first agent task with confidence that you'll know the outcome.
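Once each run records a verified outcome, a question such as "which tasks consistently fail?" becomes a small aggregation. The sketch below assumes run history is available as `(task_name, succeeded)` pairs. That shape and the function name are illustrative assumptions.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def success_rate_by_task(runs: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Compute the fraction of verified-successful runs for each task."""
    totals: Dict[str, int] = defaultdict(int)
    successes: Dict[str, int] = defaultdict(int)
    for task, succeeded in runs:
        totals[task] += 1
        if succeeded:
            successes[task] += 1
    return {task: successes[task] / totals[task] for task in totals}
```

Tasks whose rate sits well below the others are the ones worth investigating first.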

Building Accountable Agent Infrastructure

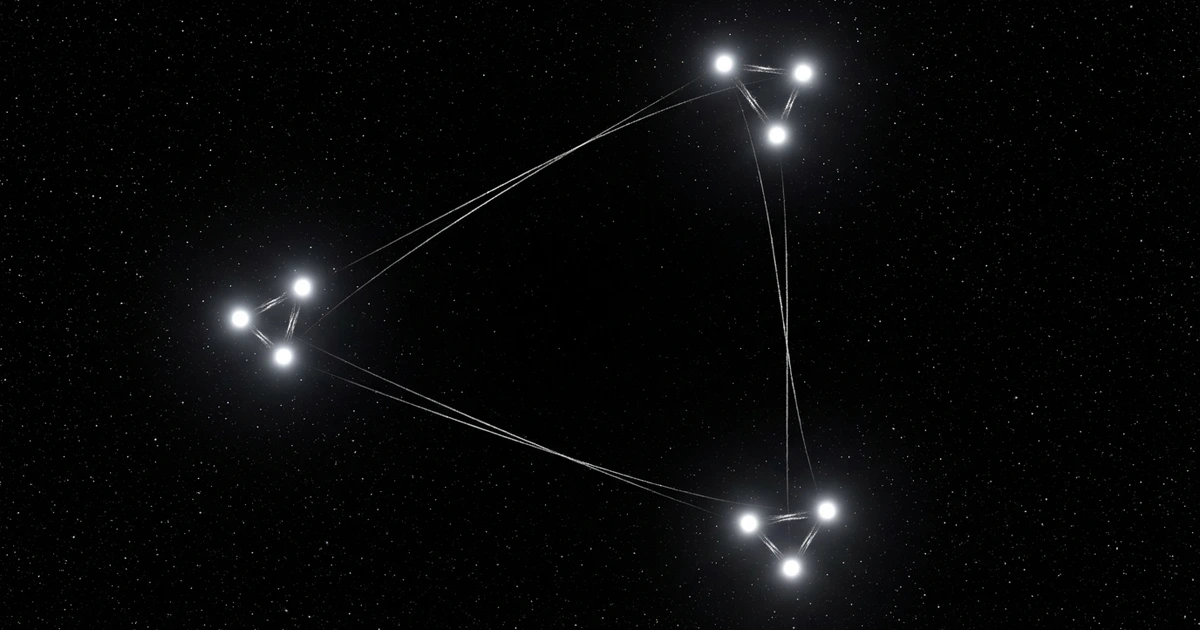

Reliable AI agents require accountable scheduling. That means infrastructure designed for the realities of AI workloads, not the assumptions of traditional batch processing.

Cron assumes binary success states. AI agents exist on a spectrum of partial successes, recoverable failures, and context-dependent outcomes. Your scheduling infrastructure should match this reality and provide the execution visibility you need.

This is also why proper scheduling helps prevent scenarios where AI agents go rogue. When you can see what your agents are actually doing, you can catch problems before they cascade.
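The spectrum of outcomes described above can be modeled with more than two states. This is one possible sketch, not a prescribed taxonomy: the `Outcome` names and the `classify` rules are assumptions chosen for illustration.

```python
from enum import Enum


class Outcome(Enum):
    SUCCESS = "success"
    PARTIAL = "partial"
    RETRYABLE_FAILURE = "retryable_failure"
    FAILURE = "failure"


def classify(expected: int, processed: int, retryable_error: bool = False) -> Outcome:
    """Map a run's counts onto an outcome richer than pass/fail."""
    if processed >= expected:
        return Outcome.SUCCESS
    if processed > 0:
        # Some work was done, but less than expected.
        return Outcome.PARTIAL
    if retryable_error:
        # Nothing was done, but the cause (e.g. a timeout) may clear on retry.
        return Outcome.RETRYABLE_FAILURE
    return Outcome.FAILURE
```

How `expected` is determined depends on context. For some tasks it is a fixed minimum, for others it comes from the size of the input queue.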

The solution isn't to fix cron. The solution is to move beyond process-centric scheduling to outcome-centric scheduling. Bridge the accountability gap. Know what your agents accomplished, not just whether they started and stopped.

Make your agents accountable. Know they worked. Get on with building.

FAQ

Q: Can't I just modify my cron jobs to report success status?
A: You can add logging and notifications to your scripts, but you're essentially building a scheduling system on top of cron. Purpose-built scheduling infrastructure handles this natively with better reliability and visibility.

Q: What's the difference between monitoring and scheduling infrastructure?
A: Monitoring tells you what happened after the fact. Scheduling infrastructure manages execution and provides real-time visibility into success and failure conditions as they occur.

Q: How do I define success criteria for my AI agent tasks?
A: Success criteria should be specific and measurable. "Process at least N items," "API response time under X seconds," or "Output file contains expected data format." Avoid vague criteria like "runs successfully."

Q: Won't this add complexity to my agent code?
A: Modern scheduling platforms integrate with minimal code changes. You typically add a few API calls to report status and metrics. The complexity reduction from better visibility outweighs the integration effort.

Q: Is this overkill for simple scheduled tasks?
A: For simple file operations or basic scripts, cron works fine. For AI agents with complex workflows, external dependencies, and business-critical outcomes, proper scheduling infrastructure is essential.


Related Articles

- Why Your Agent's Cron Job Failed - The 3am problem

- Cron vs API Scheduling - The full comparison

- AI Agents Creating Tech Debt - The hidden cost

Frequently Asked Questions

Why doesn't cron work well for AI agents?

Cron only tracks whether a process starts and finishes without errors (exit code 0), not whether the AI agent actually accomplished its intended tasks. This creates an accountability gap where your agent can appear successful while processing zero data, making no API calls, or failing to complete any meaningful work.

What's the difference between execution success and task success?

Execution success means your script ran without crashing - it gets exit code 0 and cron marks it as successful. Task success means your AI agent actually processed the expected data, completed its workflows, and achieved the intended outcomes, regardless of whether the process executed cleanly.

How do silent failures happen with AI agents?

AI agents often handle errors gracefully, logging issues and exiting cleanly even when core functionality fails. Your agent might start successfully, encounter problems like API timeouts or empty data sources, then exit with code 0 while accomplishing nothing, leaving cron unaware of the actual failure.

What problems does the accountability gap create?

Without proper success tracking, issues compound over time as broken workflows go undetected. Simple configuration fixes turn into days of debugging missing data, customer complaints increase due to unprocessed tasks, and teams lose confidence in their AI agent reliability.

What should I use instead of cron for AI agents?

You need scheduling infrastructure that understands the difference between delivery and outcomes - systems that can verify whether your agents processed the expected number of records, completed required API calls, or achieved specific success metrics rather than just tracking process execution.

Sources

- CueAPI Documentation - Complete API reference and guides

- CueAPI Quickstart - Get your first cue running in 5 minutes

- CueAPI Worker Transport - Run agents locally without a public URL

About the Author

Govind Kavaturi is co-founder of Vector Apps Inc. and CueAPI. Previously co-founded Thena (reached $1M ARR in 12 months, backed by Lightspeed, First Round, and Pear VC, with customers including Cloudflare and Etsy). Building AI-native products with small teams and AI agents. Forbes Technology Council member.