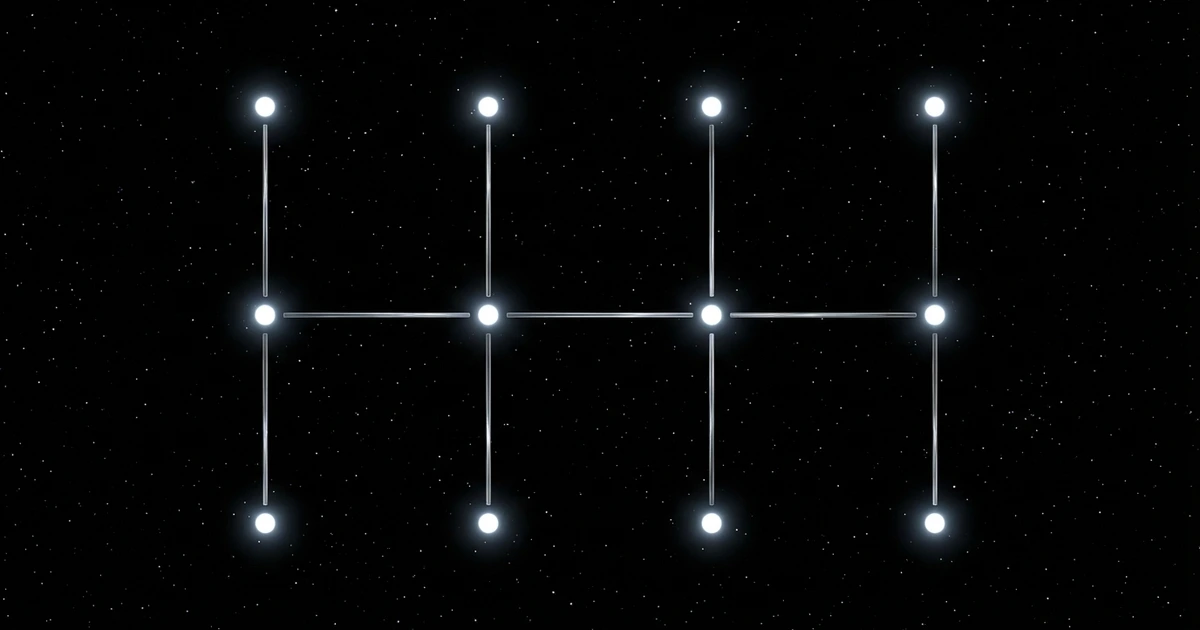

Every agent builder's nightmare: it's 3am, your agent should have run, and you have no idea whether it worked. This is the accountability gap that breaks trust in AI agents. CueAPI solves this with a simple flow: you schedule a cue for your agent, our system delivers it at the right time, then confirms the outcome and reports back to you. Real accountability means knowing your agents did what they promised to do, when they promised to do it.

TL;DR: AI agents often fail silently without users knowing, creating an accountability gap that undermines trust. CueAPI addresses this by providing a scheduling system that ensures agents run when expected and users receive confirmation of execution.

Key Takeaways:

- 62% of engineering teams experienced silent scheduling failures affecting production in 2023, with most taking over 24 hours to detect
- The accountability gap manifests in three ways: agents never start, agents start but fail, or agents succeed but produce wrong outcomes
- CueAPI closes the gap with a Schedule → Deliver → Confirm flow that tracks both execution and business outcomes
- Traditional cron schedulers only understand exit codes, not whether your agent actually accomplished its intended work

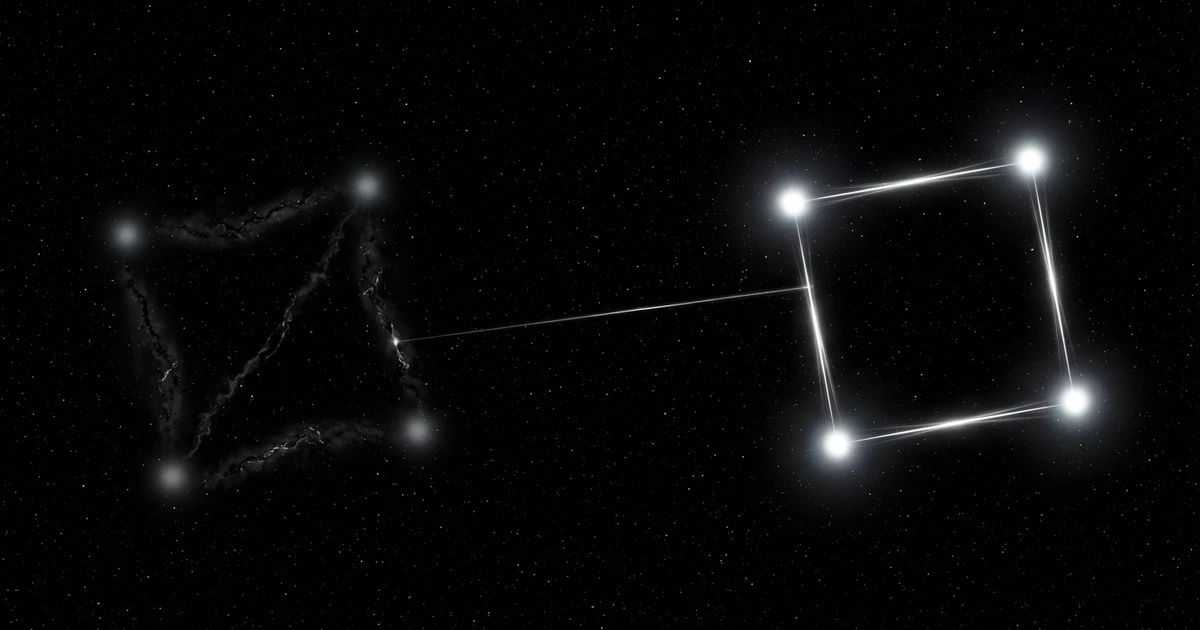

You built an agent that pulls overnight data, processes customer requests, and updates your dashboard every morning at 6am. For three weeks, it worked perfectly. Then one Monday, you check your dashboard and see Friday's data. Your agent went quiet over the weekend. No error. No alert. No trace.

Welcome to the accountability gap: the space between "my agent ran" and "my agent worked." This gap exists because most agents run on infrastructure that has no concept of success or failure beyond basic process execution.

The 3am Accountability Gap

Picture this scenario. Your agent runs a critical data sync at 3am. The scheduler fires the job. Your Python process starts. Halfway through, the API rate limit kicks in. The script exits. The scheduler logs nothing meaningful. The next morning, your downstream systems use stale data.

You discover the problem when a customer notices before you do.

This is not a hypothetical edge case. A 2023 survey by Cronitor found that 62% of engineering teams had experienced silent scheduling failures that affected production in the past year. Most took over 24 hours to detect.

The problem is fundamental: cron has no concept of delivery vs outcome. It fires jobs and forgets them. For agents that need to work reliably, this creates an accountability gap that traditional infrastructure cannot close.

What Actually Goes Wrong

The accountability gap manifests in three ways.

The agent never starts. The cron daemon crashed. The machine rebooted. The crontab got corrupted during deployment. Traditional schedulers cannot distinguish between "agent ran" and "agent was supposed to run." There is no execution visibility for missed runs.

The agent starts but fails. Your process exits with an error code. Cron mails whatever the job printed to a local mailbox that nobody monitors. In 2026, who configures local mail on servers? The failure vanishes into digital silence.

The agent succeeds but the outcome is wrong. The script ran and exited cleanly. But the API returned empty data. The database write silently dropped rows. Traditional schedulers only understand exit codes, not business outcomes. Exit code 0 tells you nothing about whether your agent actually worked.

```
# This is all cron gives you
* * * * * /usr/bin/python3 /app/agent_sync.py >> /var/log/agent.log 2>&1
```

That `>> /var/log/agent.log` is the entire accountability story. No structured logging. No delivery confirmation. No outcome tracking. If the disk fills up, you lose even that minimal visibility.
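To make the three failure modes concrete, here is a minimal Python sketch that sorts a single run into one of them. The function `classify_run` and its parameters are hypothetical and are not part of cron or CueAPI; the row counts stand in for whatever evidence your agent uses to prove it did real work.

```python
from typing import Optional


def classify_run(started: bool, exit_code: Optional[int],
                 rows_expected: int, rows_written: int) -> str:
    """Map one scheduled run onto the three failure modes described above.

    Hypothetical helper for illustration only.
    """
    if not started:
        return "never_started"   # cron keeps no record that a run was missed
    if exit_code != 0:
        return "failed"          # non-zero exit, or no exit code recorded
    if rows_written < rows_expected:
        return "wrong_outcome"   # clean exit, but the work is incomplete
    return "ok"


# Exit code 0 with zero rows written is still a failure in business terms.
print(classify_run(True, 0, rows_expected=100, rows_written=0))
```

Only the second branch is visible to a scheduler that looks at exit codes. The first and third need information that lives outside the process.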

Closing the Accountability Gap

Your agents deserve infrastructure that matches their sophistication. You spend time on model selection, tool frameworks, and evaluation harnesses. But the infrastructure that actually runs your agents is stuck in 1975.

Consider what agent accountability requires:

| Requirement | Cron | CueAPI |
|---|---|---|
| Fires on schedule | Yes | Yes |
| Confirms delivery | No | Yes |
| Retries on failure | No | Yes (3x, exponential backoff) |
| Tracks outcomes | No | Yes (success/failure reporting) |
| Alerts on missed execution | No | Yes |
| Execution visibility | No | Yes (API logs) |

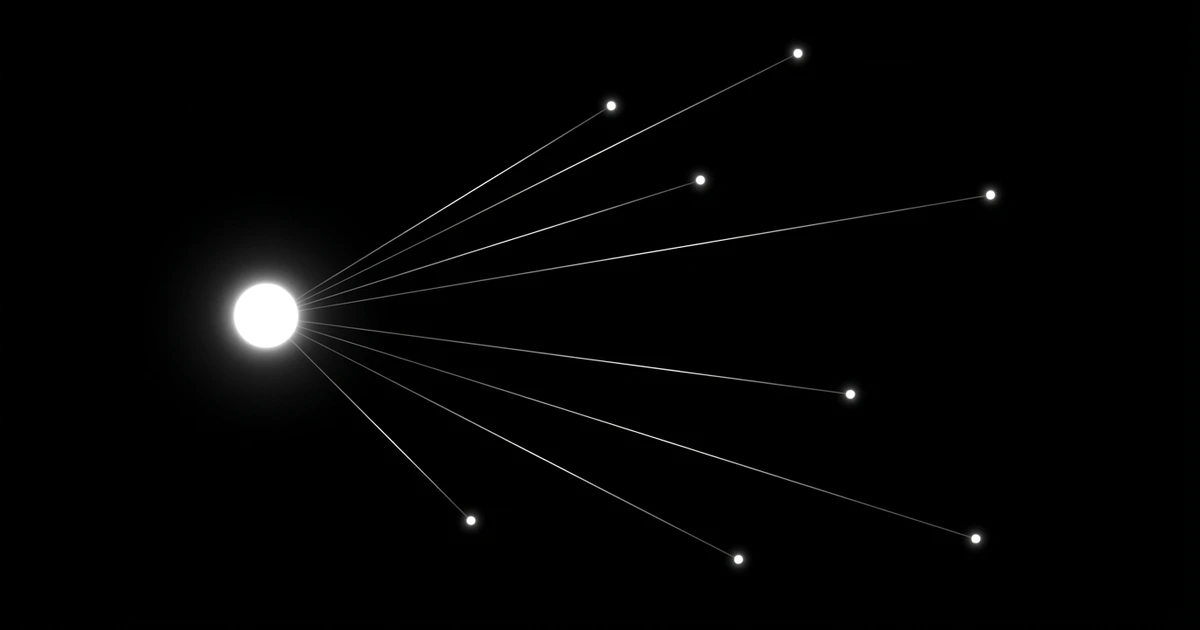

The gap is not your agent code. The gap is the accountability layer. Your agent is only as reliable as the system that triggers it and tracks its success.

What Accountability Looks Like

Replace the cron job with a cue. A cue is a scheduled task with delivery confirmation, retries, and outcome tracking built in. It runs anywhere your agents run.

curl:

```bash
curl -X POST https://api.cueapi.ai/v1/cues \
  -H "Authorization: Bearer cue_sk_..." \
  -H "Content-Type: application/json" \
  -d '{
    "name": "nightly-data-sync",
    "description": "Sync agent data at 3am",
    "schedule": {
      "type": "recurring",
      "cron": "0 3 * * *",
      "timezone": "UTC"
    },
    "transport": "webhook",
    "callback": {
      "url": "https://myapp.example.com/hooks/sync",
      "method": "POST",
      "headers": {"X-Agent": "data-sync-v2"}
    },
    "payload": {"agent": "data-sync-v2"},
    "retry": {
      "max_attempts": 3,
      "backoff_minutes": [1, 5, 15]
    }
  }'
```

Python:

```python
import os

import httpx

client = httpx.Client()
response = client.post(
    "https://api.cueapi.ai/v1/cues",
    headers={"Authorization": f"Bearer {os.environ['CUEAPI_API_KEY']}"},
    json={
        "name": "nightly-data-sync",
        "description": "Sync agent data at 3am",
        "schedule": {
            "type": "recurring",
            "cron": "0 3 * * *",
            "timezone": "UTC",
        },
        "transport": "webhook",
        "callback": {
            "url": "https://myapp.example.com/hooks/sync",
            "method": "POST",
            "headers": {"X-Agent": "data-sync-v2"},
        },
        "payload": {"agent": "data-sync-v2"},
        "retry": {
            "max_attempts": 3,
            "backoff_minutes": [1, 5, 15],
        },
    },
)
cue = response.json()
print(f"Cue created: {cue['id']}")
```

When 3am arrives, CueAPI sends a POST to your webhook. If your handler returns a 5xx or times out, CueAPI retries automatically. Three attempts with exponential backoff. Every attempt is logged with full execution visibility.
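To see what a `backoff_minutes` list like `[1, 5, 15]` could mean on the clock, the sketch below computes when each retry would fire if every attempt failed. It rests on an assumption this article does not confirm: that each delay is measured from the previous attempt. It also does not settle whether the first delivery counts toward `max_attempts`. The function `retry_times` is hypothetical and is not a CueAPI call.

```python
from datetime import datetime, timedelta, timezone
from typing import List


def retry_times(first_attempt: datetime, backoff_minutes: List[int]) -> List[datetime]:
    """Return when each retry would fire if every attempt fails.

    Assumption: each delay is counted from the previous attempt,
    not from the original scheduled time.
    """
    times = []
    current = first_attempt
    for delay in backoff_minutes:
        current = current + timedelta(minutes=delay)
        times.append(current)
    return times


# Illustrative date; only the time of day matters here.
start = datetime(2025, 1, 1, 3, 0, tzinfo=timezone.utc)
for t in retry_times(start, [1, 5, 15]):
    print(t.strftime("%H:%M"))  # 03:01, 03:06, 03:21 (one per line)
```

Under that assumption, a handler that stays down for more than about twenty minutes would exhaust the retries, which is a useful number to compare against your typical deploy or restart window.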

After your agent finishes processing, report the verified success:

curl:

```bash
curl -X POST https://api.cueapi.ai/v1/executions/exec_abc123/outcome \
  -H "Authorization: Bearer cue_sk_..." \
  -H "Content-Type: application/json" \
  -d '{
    "success": true,
    "result": "Synced 14832 rows in 4.2 seconds",
    "metadata": {"rows_synced": 14832, "duration_ms": 4200}
  }'
```

Python:

```python
import os

import httpx


def report_outcome(execution_id, success, result, metadata=None):
    client = httpx.Client()
    response = client.post(
        f"https://api.cueapi.ai/v1/executions/{execution_id}/outcome",
        headers={"Authorization": f"Bearer {os.environ['CUEAPI_API_KEY']}"},
        json={
            "success": success,
            "result": result,
            "error": None,
            "metadata": metadata or {},
        },
    )
    return response.json()


# After your sync completes
report_outcome(
    execution_id="exec_abc123",
    success=True,
    result="Synced 14832 rows in 4.2 seconds",
    metadata={"rows_synced": 14832, "duration_ms": 4200},
)
```

Now you know three things cron never told you: the webhook was delivered, the handler ran, and the sync actually worked. Check the CueAPI execution logs via the API to see every attempt, delivery time, and verified success.
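A success report is only as trustworthy as the check behind it. The sketch below wraps the agent's work, times it, and builds an outcome body using the same four fields as the examples above (`success`, `result`, `error`, `metadata`). The helper `run_and_build_outcome`, the `sync_fn` callable, and the `min_rows` threshold are all hypothetical; substitute whatever check proves your agent did its job.

```python
import time
from typing import Any, Callable, Dict


def run_and_build_outcome(sync_fn: Callable[[], int], min_rows: int = 1) -> Dict[str, Any]:
    """Run the agent's work and build an outcome body for reporting.

    sync_fn is a hypothetical callable that returns the number of rows synced.
    """
    started = time.monotonic()
    try:
        rows = sync_fn()
    except Exception as exc:
        # Any crash becomes an explicit failure report instead of silence.
        duration_ms = int((time.monotonic() - started) * 1000)
        return {
            "success": False,
            "result": "Sync raised an exception",
            "error": str(exc),
            "metadata": {"duration_ms": duration_ms},
        }
    duration_ms = int((time.monotonic() - started) * 1000)
    ok = rows >= min_rows  # a clean run that synced nothing is not a success
    return {
        "success": ok,
        "result": f"Synced {rows} rows",
        "error": None if ok else f"Expected at least {min_rows} rows, got {rows}",
        "metadata": {"rows_synced": rows, "duration_ms": duration_ms},
    }


outcome = run_and_build_outcome(lambda: 0)
print(outcome["success"], outcome["error"])
```

The returned dictionary can be sent as the JSON body of the outcome request shown above. The point of the design is that the failure path always produces a report, so an empty API response or a rate-limit exception is recorded rather than lost.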

The Real Cost of the Accountability Gap

When agents fail silently, trust erodes. Teams start building workarounds. Someone writes a script to check if the agent output looks right. Someone else sets up monitoring to watch the monitoring. Before long, you have three layers of duct tape around infrastructure that was never designed for agent accountability.

CueAPI tracks both delivery and outcome as separate signals. Delivery means the webhook reached your handler. Outcome means your handler confirmed the task succeeded. Two signals, zero ambiguity about whether your agent worked.

The free tier includes 10 cues and 300 executions per month. Enough to make every critical agent accountable and know, with certainty, whether each one worked.

Make Your Agents Accountable

Cron is fine for rotating logs. It is not fine for agents that businesses depend on. If you have ever discovered your agent went quiet by accident, by a customer complaint, or by a coworker asking "hey, is the sync still running?" then you already understand the accountability gap.

The solution is infrastructure that treats agent success as a first-class concern. Not an afterthought you discover during an incident.

Make your agents accountable. Know they worked. Get on with building.

FAQ

Q: How is this different from just adding logging to my cron job?

A: Logging tells you what happened after you think to check. Accountability tells you immediately when something goes wrong and gives you structured data about every execution.

Q: Can I use this with existing agent frameworks?

A: Yes. CueAPI works with any HTTP-accessible agent. OpenAI Functions, Replit agents, Claude tools: if it can receive a webhook, it can be made accountable.

Q: What happens if CueAPI itself goes down?

A: CueAPI is designed for high availability, but you can configure backup webhooks and fallback schedules. The accountability layer should not become a single point of failure.

Q: How much does reliable agent scheduling cost?

A: The free tier covers 10 cues and 300 executions monthly. Most teams start there. Paid plans begin at $10/month for production workloads.

Q: Can I migrate existing cron jobs gradually?

A: Yes. Start with your most critical agents. CueAPI cues use standard cron syntax for scheduling, so migration is typically a few lines of code change.
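As a rough illustration of that migration, the sketch below turns one crontab line into a cue request body shaped like the creation example earlier in this article. The helper `crontab_line_to_cue` is hypothetical, and which fields the API actually requires is not stated here, so treat the output as a starting point. It handles only plain five-field entries, not comment lines or shortcuts such as `@daily`.

```python
from typing import Any, Dict


def crontab_line_to_cue(line: str, name: str, callback_url: str,
                        tz: str = "UTC") -> Dict[str, Any]:
    """Build a cue request body from one crontab line.

    Only the five schedule fields are reused. The shell command is dropped
    because the work moves behind the webhook at callback_url.
    """
    fields = line.split(None, 5)  # five schedule fields, then the command
    if len(fields) < 6:
        raise ValueError("expected five schedule fields followed by a command")
    return {
        "name": name,
        "schedule": {
            "type": "recurring",
            "cron": " ".join(fields[:5]),
            "timezone": tz,
        },
        "transport": "webhook",
        "callback": {"url": callback_url, "method": "POST"},
    }


body = crontab_line_to_cue(
    "0 3 * * * /usr/bin/python3 /app/agent_sync.py >> /var/log/agent.log 2>&1",
    name="nightly-data-sync",
    callback_url="https://myapp.example.com/hooks/sync",
)
print(body["schedule"]["cron"])  # 0 3 * * *
```

Note that a crontab runs in the server's local time zone unless configured otherwise, so check the `timezone` value when moving a schedule across.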

Related Articles

- Silent Failures Are Expensive - The cost of the accountability gap

- Scheduled Task Failed No Notification - Why agents die silently

- Cron Has No Concept of Success - The fundamental problem with cron

Frequently Asked Questions

What is the accountability gap in AI agents?

The accountability gap is the space between "my agent ran" and "my agent worked." It occurs when agents fail silently without users knowing, creating situations where scheduled tasks appear to execute but actually fail to accomplish their intended work. This gap undermines trust in AI agents because traditional schedulers only track process execution, not business outcomes.

How common are silent cron job failures?

According to a 2023 survey by Cronitor, 62% of engineering teams experienced silent scheduling failures that affected production systems. Most teams took over 24 hours to detect these failures, highlighting the widespread nature of this problem in production environments.

What are the three main ways silent cron failures manifest?

Silent failures occur when: (1) the agent never starts due to system issues like cron daemon crashes or corrupted crontabs, (2) the agent starts but fails during execution due to API limits or other errors, and (3) the agent completes execution but produces incorrect or incomplete results. Traditional monitoring only catches exit codes, missing the deeper business logic failures.

How does CueAPI solve the silent failure problem?

CueAPI uses a Schedule → Deliver → Confirm flow that tracks both execution and business outcomes. Instead of just firing jobs and forgetting them like traditional cron, CueAPI delivers scheduled cues to your agents, monitors their execution, and confirms whether they accomplished their intended work before reporting back to you.

Why can't traditional cron schedulers detect when agents fail to accomplish their work?

Traditional cron schedulers only understand process exit codes, not business logic success. They can tell if a script crashed, but they cannot determine if your agent successfully pulled the right data, processed it correctly, or updated systems as intended. This limitation creates blind spots where agents appear to run successfully while actually failing their core objectives.

About the Author

This article was written by the CueAPI team, builders of reliable infrastructure for AI agents. CueAPI makes scheduled tasks accountable with delivery confirmation, retries, and outcome tracking.

Agent accountability doesn't have to be a black box. With proper monitoring, clear success criteria, and reliable alerting systems, you can build agents that businesses actually trust. The path forward is simple: Schedule your agent tasks with precision, Deliver them reliably when needed, and Confirm the outcomes with detailed monitoring. Make your agents accountable.
