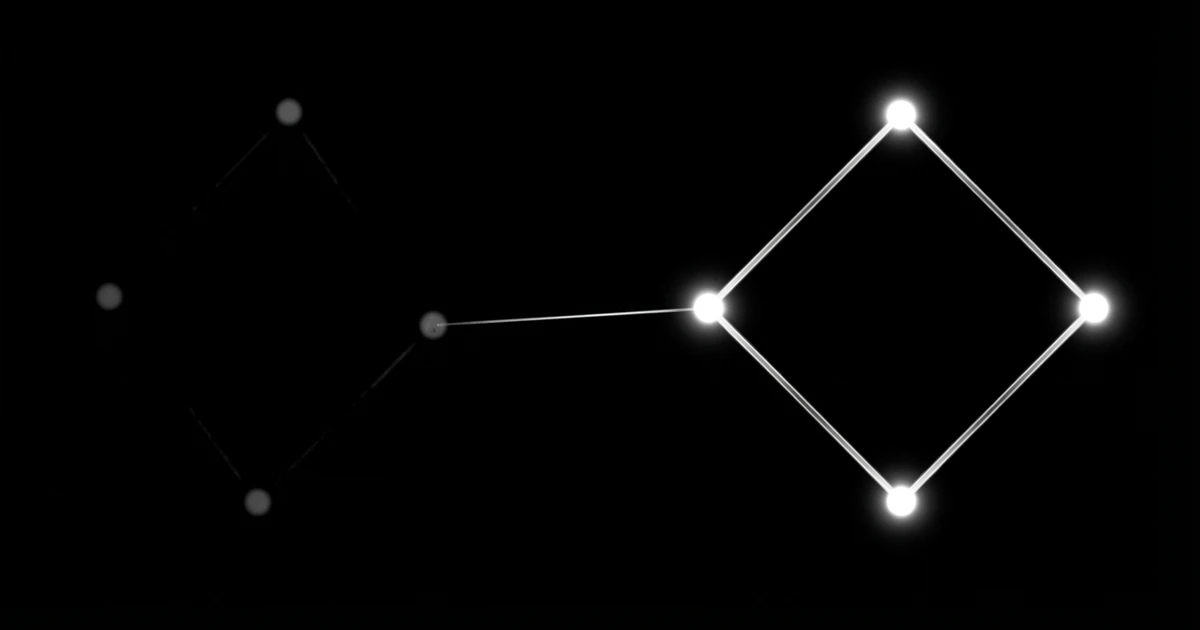

Your AI agent processed customer data at 3am. At least, you think it did. You won't know until a customer complains or you manually check the logs, and by then it has been failing for three days.

Here's what accountability looks like: schedule your agent to send a cue when it completes a task, deliver that confirmation to your monitoring system, and confirm the outcome so you know immediately when things break. This simple flow turns silent failures into visible, actionable alerts.

This is the accountability gap: the space between when your agent runs and when you know it worked. Traditional infrastructure provides no immediate feedback, leaving you blind to failures until they compound into customer-facing problems.

TL;DR: The accountability gap leaves AI agents running with no verification of success or failure. Modern scheduling APIs close it with immediate feedback through webhooks, so you catch issues before customers do. It's the difference between debugging for hours and knowing instantly what broke.

Key Takeaways:

- The accountability gap lets AI agents fail silently for days; developers discover the issues only when customers complain or someone checks the logs manually.
- Modern accountability follows a Schedule → Deliver → Confirm flow, turning silent failures into visible, actionable alerts through immediate feedback.
- One customer's invoice generation agent failed nightly for two weeks undetected; by the time it was reported, thousands of dollars in revenue were missing.
- API-based schedulers provide verified success tracking and real-time failure detection, removing the need to monitor multiple agents by hand.

The Silent Failure Problem

Cron has no concept of success. It starts your command on schedule and moves on without checking the exit status. Whether your agent's job failed spectacularly or completed perfectly, cron treats both the same.

This silent operation creates the accountability gap. Your scheduled task could be failing every hour for days. No alerts. No notifications. Just quiet failure accumulating in the background while you remain unaware.

Most developers discover these failures by accident. A user reports stale data. A weekly report doesn't arrive. An integration stops working. The failure happened days ago, but you're finding out now.

Why Traditional Monitoring Falls Short

Traditional monitoring focuses on resource usage, not verified success. Your server might be healthy while your scheduled tasks fail silently. CPU usage looks normal. Memory is fine. But your agent isn't processing new data.

Manual log checking doesn't scale. With multiple agents running different schedules, manually verifying success becomes impossible. You need automated failure detection that runs anywhere and provides immediate feedback.
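One common way to automate detection without watching dashboards is a heartbeat (or "dead man's switch") check: each job records when it last confirmed success, and a checker flags any job whose last confirmation is older than its expected interval plus a grace period. This is a minimal sketch; the job names, intervals, and 10-minute grace period are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class JobStatus:
    name: str
    interval: timedelta               # how often the job is expected to confirm success
    last_success: Optional[datetime]  # None if the job has never confirmed


def overdue_jobs(jobs, now, grace=timedelta(minutes=10)):
    """Return names of jobs whose last confirmed success is older than interval + grace."""
    late = []
    for job in jobs:
        if job.last_success is None or now - job.last_success > job.interval + grace:
            late.append(job.name)
    return late


if __name__ == "__main__":
    now = datetime(2024, 5, 1, 9, 0, tzinfo=timezone.utc)
    jobs = [
        JobStatus("nightly-invoices", timedelta(days=1), now - timedelta(days=3)),
        JobStatus("hourly-sync", timedelta(hours=1), now - timedelta(minutes=30)),
        JobStatus("new-agent", timedelta(hours=1), None),
    ]
    print(overdue_jobs(jobs, now))  # ['nightly-invoices', 'new-agent']
```

The check looks for the absence of a confirmation rather than the presence of an error, so it also catches jobs that never started at all.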

External monitoring tools require additional infrastructure and maintenance. Each tool introduces complexity and potential failure points, widening the accountability gap rather than closing it.

The Real Cost of Missed Failures

Silent failures compound over time. A data sync that fails Monday affects Tuesday's processing. By Friday, you're dealing with a week of accumulated errors instead of fixing one simple timeout.

Customer trust erodes with each missed execution. Users expect reliable automation. When your agent fails to process their requests, they lose confidence in your entire system.

Debugging stale failures wastes engineering time. Tracking down a three-day-old error requires recreating historical conditions. Fresh failures are easier to diagnose and fix.

Real example: A customer's invoice generation agent failed every night for two weeks. The finance team manually created invoices without telling engineering. By the time they finally reported the issue, the billing system showed thousands of dollars in missing revenue.

How Modern Job Schedulers Handle Failures

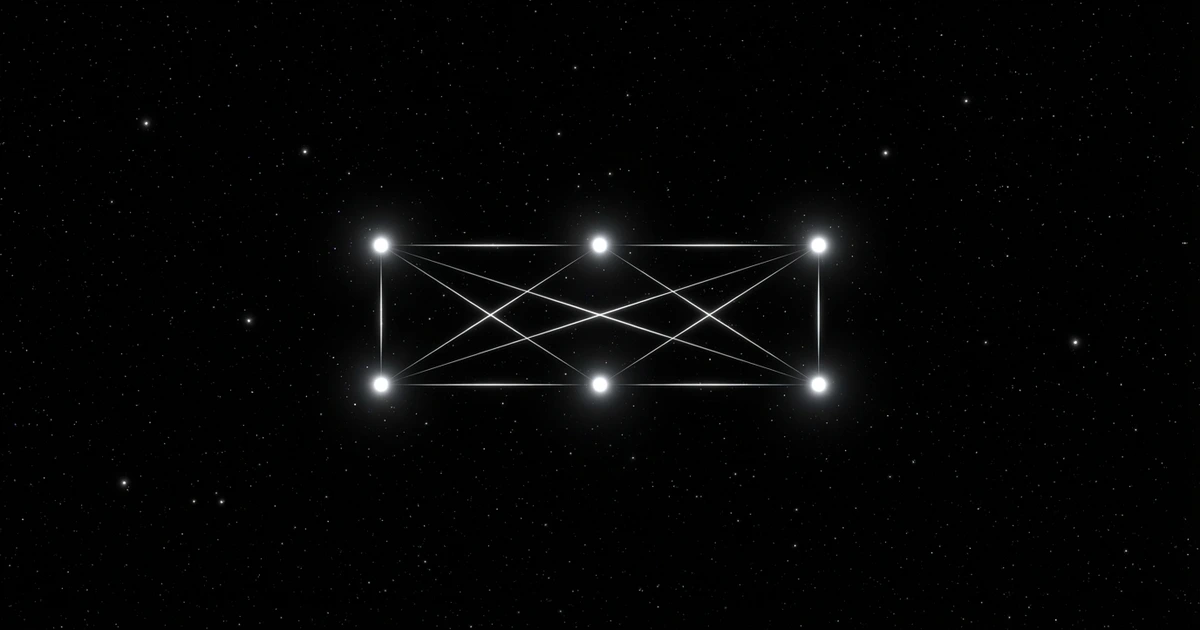

API-based schedulers know when jobs succeed or fail. They track execution results, capture error details, and provide immediate feedback. This visibility transforms debugging from archaeology to real-time problem solving.

Built-in retry logic handles transient failures automatically. Network timeouts and temporary service unavailability resolve without manual intervention. Only persistent failures require human attention.
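Retry logic of this kind is usually exponential backoff: retry transient errors a bounded number of times with growing delays, then surface the failure. This is a minimal sketch, assuming the job is a Python callable and that `TransientError` (a hypothetical exception class) marks retryable failures; a scheduler would apply the same policy on its side.

```python
import time


class TransientError(Exception):
    """Hypothetical marker for retryable failures (timeouts, temporary unavailability)."""


def run_with_retries(job, max_attempts=3, base_delay=1.0, sleep=time.sleep):
    """Run job(); retry TransientError with exponential backoff.

    Returns (result, attempts). Re-raises the last TransientError once attempts
    run out; any other exception propagates immediately without a retry.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return job(), attempt
        except TransientError:
            if attempt == max_attempts:
                raise  # persistent failure: hand it to a human
            # Delays grow as base_delay * 1, 2, 4, ...
            sleep(base_delay * 2 ** (attempt - 1))


if __name__ == "__main__":
    calls = {"n": 0}

    def flaky():
        calls["n"] += 1
        if calls["n"] < 3:
            raise TransientError("timeout")
        return "ok"

    delays = []
    print(run_with_retries(flaky, sleep=delays.append))  # ('ok', 3)
    print(delays)  # [1.0, 2.0]
```

Injecting `sleep` keeps the function testable without real waiting.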

Structured logging captures execution context. You get error messages, execution duration, and failure timing without setting up separate logging infrastructure.
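A structured execution record is one machine-readable line per run. The sketch below shows what such a record might contain; the field names are hypothetical, not any particular scheduler's log schema.

```python
import json
import time
from datetime import datetime, timezone


def execution_record(job_id, fn):
    """Run fn() and return a JSON log line describing the run."""
    started = datetime.now(timezone.utc)
    t0 = time.monotonic()
    status, error = "success", None
    try:
        fn()
    except Exception as exc:  # record the failure as data instead of crashing the logger
        status, error = "failed", f"{type(exc).__name__}: {exc}"
    return json.dumps({
        "job_id": job_id,
        "status": status,
        "error": error,
        "started_at": started.isoformat(),
        "duration_ms": round((time.monotonic() - t0) * 1000, 1),
    })


if __name__ == "__main__":
    print(execution_record("sync-1", lambda: 1 / 0))
```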

Built-in Failure Detection

Modern schedulers monitor HTTP response codes. A 200 response indicates verified success. Anything else triggers failure handling. This simple contract eliminates guesswork about job status.
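The status-code contract above fits in a few lines. This is a minimal sketch: treating only 200 as success follows the text, while marking 429 and 5xx responses as retryable is an assumption (a common convention, not something every scheduler does).

```python
from enum import Enum


class Outcome(Enum):
    SUCCESS = "success"
    RETRY = "retry"    # likely transient: try again later
    FAILED = "failed"  # unlikely to fix itself: alert


def classify_status(status_code: int) -> Outcome:
    """Map an HTTP status code from the agent's endpoint to a job outcome."""
    if status_code == 200:
        return Outcome.SUCCESS
    if status_code == 429 or 500 <= status_code <= 599:
        return Outcome.RETRY
    return Outcome.FAILED
```

If your endpoints return 201 or 204 on success, you would widen the first check to `200 <= status_code < 300`.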

Execution timeouts prevent hanging jobs. If your agent doesn't respond within the configured time, the scheduler marks it as failed and can retry. No more hung processes consuming resources indefinitely.

Exit code analysis works for command-line tools wherever they run. Exit code 0 means success; any non-zero code indicates failure. The scheduler can read these codes and route failures to the appropriate handlers.
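For command-line agents, both checks (timeout and exit code) can be expressed with Python's `subprocess.run`: the `timeout` argument bounds how long the job may hang, and the return code decides success. This is a minimal sketch; the result labels are hypothetical.

```python
import subprocess
import sys


def run_command(cmd, timeout_s):
    """Run cmd (a list of args) and classify the result by exit code.

    Returns ("success", 0), ("failed", code), or ("timeout", None).
    """
    try:
        proc = subprocess.run(cmd, capture_output=True, text=True, timeout=timeout_s)
    except subprocess.TimeoutExpired:
        # subprocess.run kills the child before re-raising TimeoutExpired,
        # so a timed-out job does not linger.
        return "timeout", None
    if proc.returncode == 0:
        return "success", 0
    return "failed", proc.returncode


if __name__ == "__main__":
    print(run_command([sys.executable, "-c", "print('done')"], timeout_s=10))
    print(run_command([sys.executable, "-c", "import sys; sys.exit(3)"], timeout_s=10))
    print(run_command([sys.executable, "-c", "import time; time.sleep(5)"], timeout_s=1))
```

In a real wrapper you would also keep `proc.stderr` from failed runs, since it usually holds the error message an alert needs.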

Notification Systems That Actually Work

Webhook notifications deliver instant alerts. When a job fails, the scheduler immediately posts failure details to your monitoring system. No polling. No delays. Instant awareness.
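On the sending side, a failure webhook is an HTTP POST with a JSON body. This is a minimal sketch using only the standard library; the payload fields and the endpoint URL are hypothetical placeholders, not a specific scheduler's format.

```python
import json
import urllib.request


def build_failure_webhook(url, job_id, reason, attempt):
    """Build (but do not send) a POST request carrying failure details as JSON."""
    body = json.dumps({
        "event": "job.failed",
        "job_id": job_id,
        "reason": reason,
        "attempt": attempt,
    }).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_webhook(request, timeout_s=5):
    """Send the request; a short timeout keeps alerting from stalling the caller."""
    with urllib.request.urlopen(request, timeout=timeout_s) as resp:
        return resp.status
```

Usage: `send_webhook(build_failure_webhook("https://alerts.example.com/hook", "job-42", "timeout", 3))`. Separating construction from sending makes the payload easy to test without a network.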

Slack integration puts failures in your team channel. Everyone sees the alert. Context switching from debugging to collaboration happens naturally. No email chains or ticket systems required.
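Slack's incoming webhooks accept a JSON body with a `text` field, so routing a failure into a channel is mostly formatting. This is a minimal sketch; the webhook URL comes from your Slack app configuration, and the message layout is just one option.

```python
import json
import urllib.request


def slack_failure_message(job_id, reason, attempt):
    """Format a failure as a Slack incoming-webhook payload."""
    return {"text": f":red_circle: Job `{job_id}` failed after {attempt} attempt(s): {reason}"}


def post_to_slack(webhook_url, payload, timeout_s=5):
    """POST the payload to a Slack incoming-webhook URL and return the HTTP status."""
    req = urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout_s) as resp:
        return resp.status
```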

PagerDuty escalation handles critical failures. Important jobs can trigger on-call alerts if initial notifications don't get immediate attention, giving scheduled work the same escalation path as your core services.
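For escalation, PagerDuty's Events API v2 accepts a `trigger` event POSTed as JSON to `https://events.pagerduty.com/v2/enqueue`. This is a minimal sketch that only builds the event body; the routing key is a placeholder for your service's integration key, and using the job ID as `dedup_key` (so repeated failures update one incident instead of opening many) is a design choice, not a requirement.

```python
import json

PAGERDUTY_EVENTS_URL = "https://events.pagerduty.com/v2/enqueue"


def pagerduty_trigger(routing_key, job_id, reason, severity="critical"):
    """Build a PagerDuty Events API v2 trigger event for a failed job."""
    if severity not in {"critical", "error", "warning", "info"}:
        raise ValueError(f"unsupported severity: {severity}")
    return {
        "routing_key": routing_key,
        "event_action": "trigger",
        "dedup_key": f"job-{job_id}",
        "payload": {
            "summary": f"Scheduled job {job_id} failed: {reason}",
            "source": "scheduler",  # hypothetical source name
            "severity": severity,
            "custom_details": {"job_id": job_id, "reason": reason},
        },
    }


if __name__ == "__main__":
    event = pagerduty_trigger("YOUR_ROUTING_KEY", "nightly-invoices", "timeout")
    print(json.dumps(event, indent=2))
```

The body is sent with an ordinary JSON POST to `PAGERDUTY_EVENTS_URL`.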

Setting Up Reliable Failure Notifications

Webhook endpoints receive structured failure data. The payload includes job ID, failure reason, execution time, and retry count. Your alerting system gets everything needed to diagnose and route the issue.
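On the receiving side, parsing the payload into a typed record early keeps routing code simple and rejects malformed events. This is a minimal sketch; the field names mirror the list above but are hypothetical, so match them to whatever your scheduler actually sends.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class FailureEvent:
    job_id: str
    reason: str
    execution_time_ms: int
    retry_count: int


def parse_failure_event(raw: bytes) -> FailureEvent:
    """Parse a webhook body into a FailureEvent.

    Raises ValueError on invalid JSON (json.JSONDecodeError is a ValueError)
    or when a required field is missing.
    """
    data = json.loads(raw)
    required = ("job_id", "reason", "execution_time_ms", "retry_count")
    missing = [key for key in required if key not in data]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    return FailureEvent(
        job_id=str(data["job_id"]),
        reason=str(data["reason"]),
        execution_time_ms=int(data["execution_time_ms"]),
        retry_count=int(data["retry_count"]),
    )
```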

Notification filtering prevents alert fatigue. Configure different alert levels for different job types. Critical agent processes trigger immediate alerts. Non-critical tasks might only notify during business hours.
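Criticality-based filtering can be a small routing function. This is a minimal sketch, assuming two hypothetical tiers and business hours of 09:00 to 17:00 on weekdays in the timestamp's own time zone; non-critical failures outside those hours are queued rather than dropped.

```python
from datetime import datetime


def alert_action(critical: bool, when: datetime) -> str:
    """Decide whether a failure alerts now or waits for business hours."""
    if critical:
        return "alert_now"
    is_weekday = when.weekday() < 5  # Monday=0 ... Friday=4
    in_hours = 9 <= when.hour < 17
    return "alert_now" if (is_weekday and in_hours) else "queue_for_business_hours"
```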

Integration with existing tools requires minimal setup. Most scheduling APIs support popular notification services out of the box. Connect your Slack, Discord, or email system with a single configuration change.

Webhook Endpoints for Instant Alerts

Failure webhooks contain actionable information. Instead of generic "job failed" messages, you get specific error codes, execution logs, and suggested next steps. Debugging starts immediately instead of after log hunting.

Success webhooks close the loop. Positive confirmation that important jobs finished eliminates uncertainty, so your morning standup discusses new features instead of whether yesterday's data sync worked.

Custom webhook handlers enable sophisticated routing. Route database failures to the infrastructure team. Route data processing failures to the product team. The right people get the right alerts automatically.
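Routing by failure type can start as a lookup table with a fallback, so an unrecognized category still reaches someone. This is a minimal sketch; the categories, team names, and fallback route are hypothetical.

```python
ROUTES = {
    "database": "infrastructure-team",
    "data_processing": "product-team",
}
DEFAULT_ROUTE = "on-call"  # unknown categories go here instead of being dropped


def route_failure(category: str) -> str:
    """Return the team that should receive a failure of this category."""
    return ROUTES.get(category, DEFAULT_ROUTE)
```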

Monitoring Patterns That Scale

Failure rate monitoring tracks job reliability over time. Trending data shows whether changes improve or degrade job success rates. Performance regressions become visible before they impact users.
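A failure rate over a sliding window of recent runs is enough to spot a trend. This is a minimal sketch that keeps the last N outcomes for one job; the window size is an arbitrary assumption.

```python
from collections import deque


class FailureRate:
    """Track the failure rate over the most recent `window` runs."""

    def __init__(self, window: int = 50):
        self.outcomes = deque(maxlen=window)  # True = success, False = failure

    def record(self, success: bool) -> None:
        self.outcomes.append(success)

    def rate(self) -> float:
        if not self.outcomes:
            return 0.0
        failures = sum(1 for ok in self.outcomes if not ok)
        return failures / len(self.outcomes)
```

Comparing `rate()` before and after a deploy is a cheap way to see whether a change degraded reliability.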

Downstream dependency tracking maps job relationships. When the data ingestion job fails, automatically alert teams whose jobs depend on that data. Cascading failure awareness prevents wasted debugging effort.
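Given a map from each job to the jobs that consume its output, finding everyone affected by a failure is a graph traversal. This is a minimal sketch with a hypothetical dependency map; breadth-first search with a `seen` set handles shared dependencies and cycles without alerting anyone twice.

```python
from collections import deque


def affected_jobs(dependents: dict, failed: str) -> list:
    """Return every job downstream of `failed`, in breadth-first order."""
    seen = {failed}
    order = []
    queue = deque([failed])
    while queue:
        job = queue.popleft()
        for child in dependents.get(job, []):
            if child not in seen:
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


# Hypothetical map: job -> jobs that depend on its output.
DEPENDENTS = {
    "ingest": ["enrich", "daily-report"],
    "enrich": ["personalization", "daily-report"],
}

if __name__ == "__main__":
    print(affected_jobs(DEPENDENTS, "ingest"))  # ['enrich', 'daily-report', 'personalization']
```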

Alert grouping reduces notification spam. Multiple failures from the same root cause generate a single grouped alert instead of flooding your notification channels. Signal-to-noise ratio stays high.
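Grouping needs a key that identifies a shared root cause and a time window. This is a minimal sketch, assuming failures that share an error signature within five minutes (an arbitrary choice) belong to one alert; choosing a good signature is the hard part in practice.

```python
from datetime import datetime, timedelta


def group_failures(failures, window=timedelta(minutes=5)):
    """Group (timestamp, job_id, signature) tuples into one alert per signature per window.

    A new group starts when a failure arrives more than `window` after the
    first failure of the current group for that signature.
    """
    groups = []      # one dict per alert
    open_group = {}  # signature -> index of its current group in `groups`
    for ts, job_id, signature in sorted(failures):
        idx = open_group.get(signature)
        if idx is not None and ts - groups[idx]["first_seen"] <= window:
            groups[idx]["jobs"].append(job_id)
        else:
            open_group[signature] = len(groups)
            groups.append({"signature": signature, "first_seen": ts, "jobs": [job_id]})
    return groups
```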

Real-World Impact of Silent Failures

When e-commerce inventory updates fail silently, unavailable products show as in stock. Customers place orders for items you can't fulfill. Revenue impact compounds with each missed sync cycle.

Financial reconciliation jobs that fail without notification create audit problems. Month-end closing requires manual verification of automated processes. What should take hours extends to days of manual checking.

Customer data synchronization failures break personalization features. Users see generic content instead of tailored experiences. Engagement drops while you remain unaware of the underlying sync failures.

Compare this to knowing immediately when something breaks. Fix the timeout. Restart the failed job. Customers never notice the brief interruption. This is the difference between reactive firefighting and proactive maintenance.

Reliable scheduling APIs let you focus on agent capabilities instead of infrastructure debugging. When you know immediately whether each execution succeeded or failed, building becomes your primary activity instead of monitoring.

FAQ

Q: How quickly should I get notified when a scheduled task fails? A: Immediately via webhook. Critical failures should trigger alerts within seconds, not minutes. The faster you know, the faster you can fix issues before they impact users.

Q: What information should failure notifications include? A: Job ID, failure reason, timestamp, retry count, and execution logs. Enough context to start debugging immediately without hunting through multiple systems.

Q: Should I get notified for every job failure or only repeated failures? A: Configure based on job criticality. Data processing jobs should alert on first failure. Non-critical cleanup tasks might only alert after multiple consecutive failures.
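Alerting on the first failure versus after several consecutive failures comes down to a per-job threshold. This is a minimal sketch; the thresholds are illustrative, a success resets the counter, and the alert fires once per streak rather than on every subsequent failure.

```python
class ConsecutiveFailureAlert:
    """Signal an alert when a job fails `threshold` times in a row."""

    def __init__(self, threshold: int):
        self.threshold = threshold
        self.streak = 0

    def observe(self, success: bool) -> bool:
        """Record one run; return True exactly when the streak reaches the threshold."""
        if success:
            self.streak = 0
            return False
        self.streak += 1
        return self.streak == self.threshold


critical = ConsecutiveFailureAlert(threshold=1)  # e.g. data processing: alert on first failure
cleanup = ConsecutiveFailureAlert(threshold=3)   # e.g. cleanup: alert after 3 in a row
```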

Q: How do I prevent alert fatigue from too many notifications? A: Use notification filtering and grouping. Route different job types to different channels. Group related failures into single alerts. Adjust frequency based on job importance.

Q: Can I integrate failure notifications with my existing monitoring tools? A: Yes, most modern scheduling APIs support webhook notifications that integrate with Slack, PagerDuty, email, and custom monitoring systems. Setup typically requires just configuring an endpoint URL.

Make your agents accountable. Know they worked. Get on with building.


Frequently Asked Questions

What is the accountability gap in scheduled tasks?

The accountability gap is the space between when your AI agent runs and when you know whether it actually worked. Traditional scheduling systems like cron execute tasks but provide no feedback about success or failure, leaving you blind to issues until customers complain or you manually discover problems days later.

Why don't traditional monitoring tools catch scheduled task failures?

Traditional monitoring focuses on server health metrics like CPU and memory usage rather than verifying task completion. Your server can appear perfectly healthy while your scheduled tasks fail silently, making resource-based monitoring ineffective for detecting agent execution problems.

How long do silent failures typically go undetected?

Silent failures can persist for days or even weeks without detection. One customer's invoice generation agent failed nightly for 2 weeks before being reported, resulting in thousands of dollars in missing revenue. Most developers only discover these issues when users report problems or during manual log reviews.

What's the difference between cron and modern API-based schedulers?

Cron executes commands and moves on with no concept of success or failure verification. Modern API-based schedulers provide immediate feedback through webhooks and verified success tracking, allowing you to catch issues instantly rather than discovering them through manual checking or customer complaints.

How can I implement accountability for my scheduled tasks?

Follow the Schedule → Deliver → Confirm flow by having your agent send confirmation when tasks complete, delivering that confirmation to your monitoring system, and confirming the outcome immediately. This transforms silent failures into visible, actionable alerts that let you fix problems before they compound into customer-facing issues.
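On the agent side, confirming means reporting an outcome for every run, failures included, rather than only logging locally. This is a minimal sketch; the `report` callable stands in for whatever delivers the confirmation (a webhook POST, a scheduler API call), and the payload fields are hypothetical, not CueAPI's actual API.

```python
import traceback
from datetime import datetime, timezone
from typing import Callable


def run_and_confirm(run_id: str, task: Callable[[], object], report: Callable[[dict], None]):
    """Run one scheduled task and always report its outcome.

    Returns the task's result on success. On failure, reports the error and
    re-raises so the process still exits non-zero.
    """
    started = datetime.now(timezone.utc).isoformat()
    try:
        result = task()
    except Exception as exc:
        report({
            "run_id": run_id,
            "status": "failed",
            "started_at": started,
            "error": f"{type(exc).__name__}: {exc}",
            "traceback": traceback.format_exc(),
        })
        raise
    report({"run_id": run_id, "status": "success", "started_at": started, "result": result})
    return result
```

A process that is killed outright never reaches `report`, so this works best alongside a check on the receiving side that flags runs whose confirmation never arrived.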

About the Author

Govind Kavaturi is co-founder of Vector Apps Inc. and CueAPI. Previously co-founded Thena (reached $1M ARR in 12 months, backed by Lightspeed, First Round, and Pear VC, with customers including Cloudflare and Etsy). Building AI-native products with small teams and AI agents. Forbes Technology Council member.